ShaDDR: Interactive Example-Based Geometry and Texture Generation via 3D Shape Detailization and Differentiable Rendering

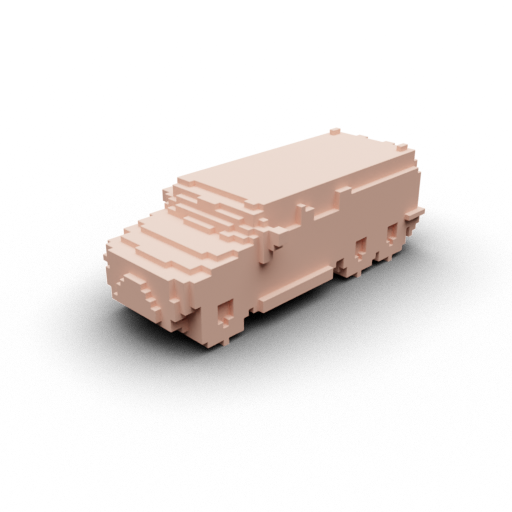

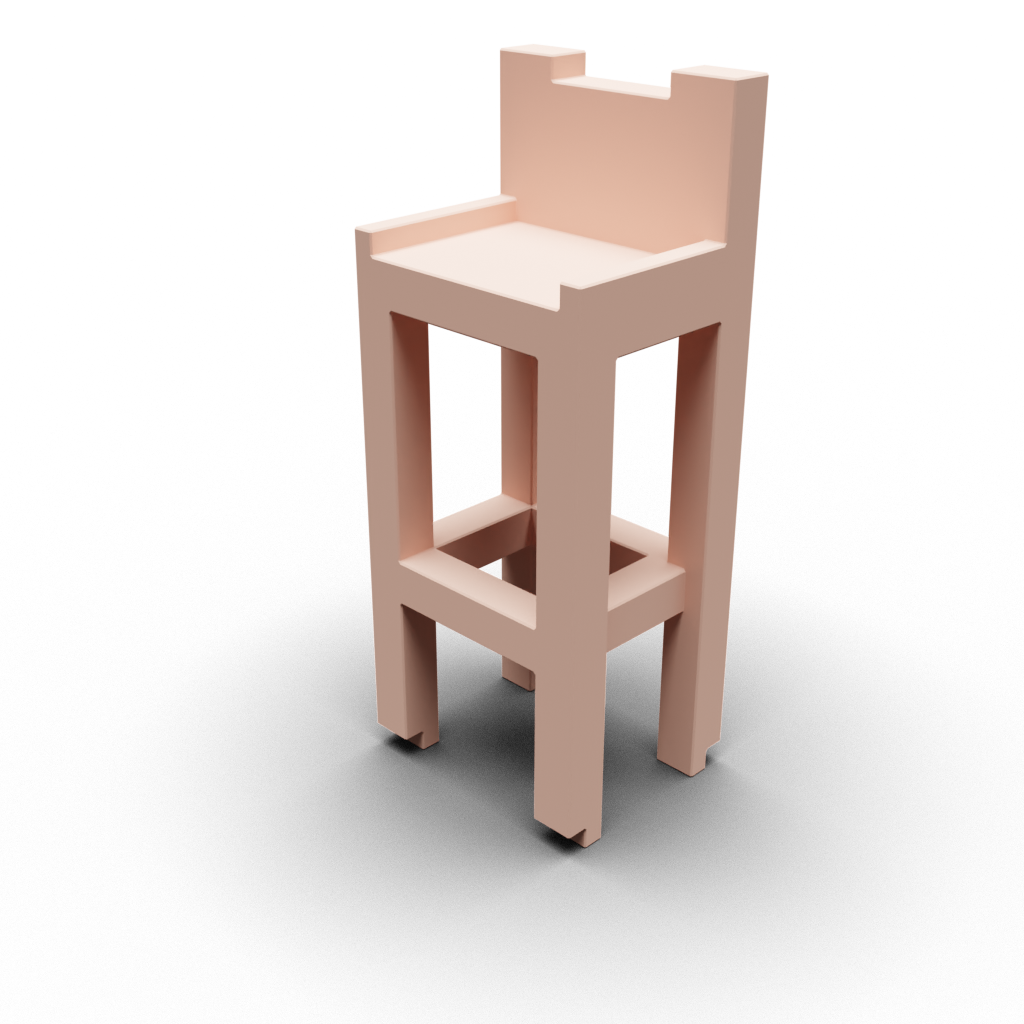

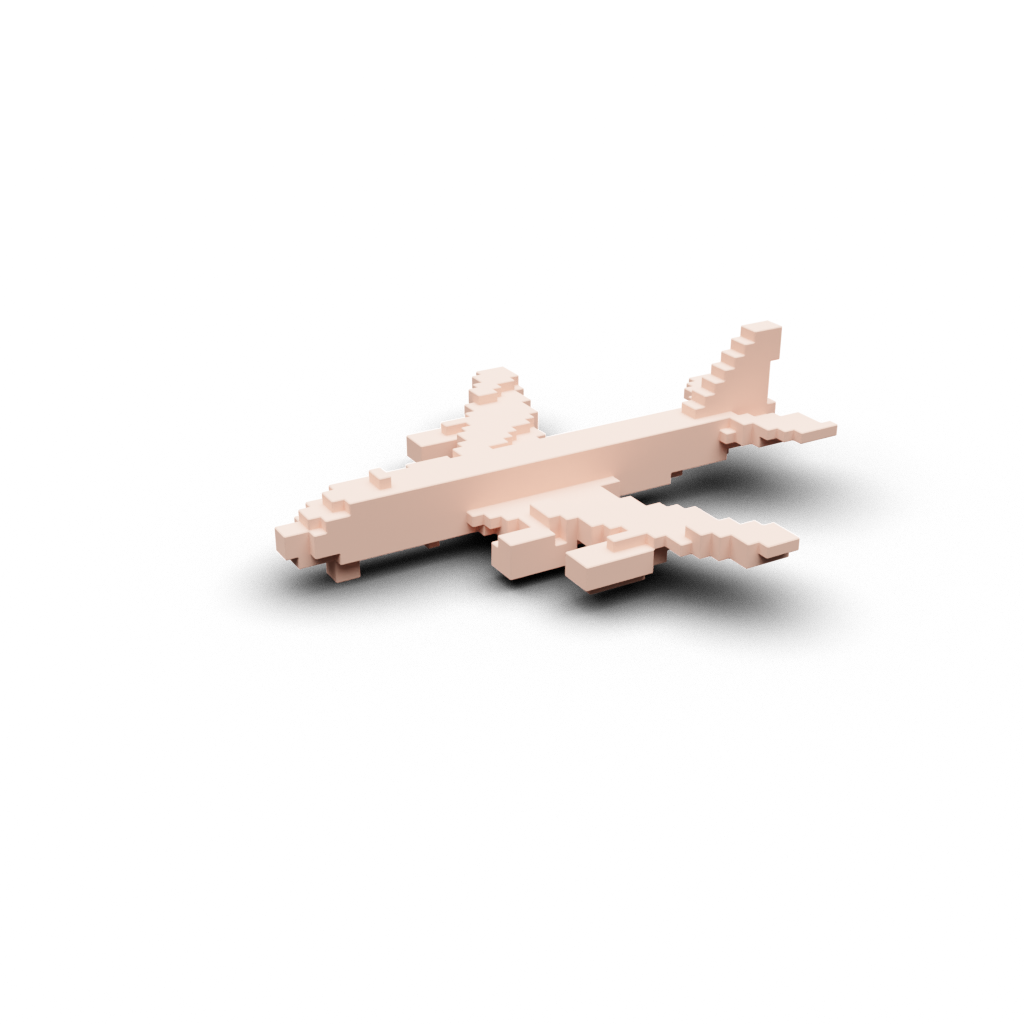

Given a coarse voxel shape and a textured exemplar shape (top row), our network generates a geometrically detailized and textured version of the coarse shape (bottom row) in less than 1 second, with geometry and texture generations both conditioned on the exemplar. See zoom-ins for some details.

- Abstract -

We present ShaDDR, an example-based deep generative neural network which produces a high-resolution textured 3D shape through geometry detailization and conditional texture generation applied to an input coarse voxel shape. Trained on a small set of detailed and textured exemplar shapes, our method learns to detailize the geometry via multi-resolution voxel upsampling and generate textures on voxel surfaces via differentiable rendering against exemplar texture images from a few views. The generation is real-time, taking less than 1 second to produce a 3D model with voxel resolutions up to \(512^3\). The generated shape preserves the overall structure of the input coarse voxel model, while the style of the generated geometric details and textures can be manipulated through learned latent codes. In the experiments, we show that our method can generate higher-resolution shapes with plausible and improved geometric details and clean textures compared to prior works. Furthermore, we showcase the ability of our method to learn geometric details and textures from shapes reconstructed from real-world photos. In addition, we have developed an interactive modeling application to demonstrate the generalizability of our method to various user inputs and the controllability it offers, allowing users to interactively sculpt a coarse voxel shape to define the overall structure of the detailized 3D shape.

- Method -

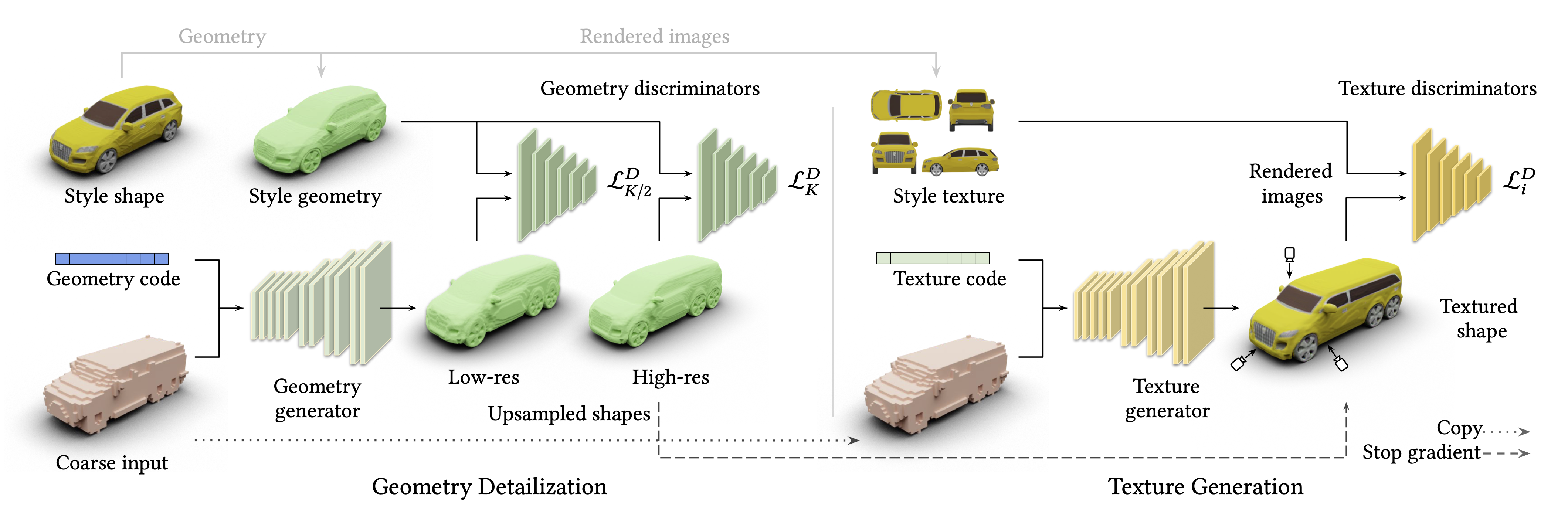

An overview of ShaDDR's two-phase pipeline and network architecture, in which the input "style shape" provides the exemplars for both the detailed geometry and the multi-view textures. Conditioned on the geometry code, the geometry generator upsamples a coarse input voxel grid into detailed geometry at two resolutions, \((K/2)^3\) and \(K^3\). The geometry discriminators encourage local patches of the upsampled geometry to be plausible with respect to the target geometry style. The texture generator takes the texture code and the same coarse voxels as input and synthesizes a 3D volumetric texture for the upsampled geometry. The generated geometry and texture are rendered into 2D images from different views, and the texture discriminators encourage local patches of the rendered images to be plausible with respect to the target texture style.
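The data flow described above can be illustrated with a small NumPy sketch. It is not the ShaDDR network: nearest-neighbour repetition stands in for the learned geometry generator, and a hard first-hit projection along one grid axis stands in for the differentiable renderer (this stand-in is not differentiable). The function names `upsample_nearest` and `render_first_hit`, the coarse resolution of 4, and the choice \(K = 16\) are all hypothetical and chosen only to keep the example small.

```python
import numpy as np

def upsample_nearest(vox, factor):
    """Repeat every voxel `factor` times along each of the three axes."""
    return vox.repeat(factor, axis=0).repeat(factor, axis=1).repeat(factor, axis=2)

def render_first_hit(occupancy, colors, axis=2, background=1.0):
    """Project a textured voxel grid onto an image along one grid axis.

    occupancy: (D, H, W) boolean grid.
    colors:    (D, H, W, 3) volumetric texture.
    Each ray takes the color of the first occupied voxel it meets;
    rays that hit nothing receive the background value.
    """
    occ = np.moveaxis(occupancy, axis, -1)   # rays run along the last axis
    col = np.moveaxis(colors, axis, -2)      # ray axis sits just before channels
    hit = occ.any(axis=-1)                   # which rays meet the surface
    first = occ.argmax(axis=-1)              # index of first occupied voxel per ray
    picked = np.take_along_axis(col, first[..., None, None], axis=-2)[..., 0, :]
    image = np.where(hit[..., None], picked, background)
    return image, hit

# Hypothetical sizes: a 4^3 coarse grid and K = 16.
K = 16
coarse = np.zeros((4, 4, 4), dtype=bool)
coarse[1:3, 1:3, 1:3] = True                 # a small solid block

geo_half = upsample_nearest(coarse, (K // 2) // 4)   # (K/2)^3 grid
geo_full = upsample_nearest(coarse, K // 4)          # K^3 grid

# Toy volumetric texture: the red channel encodes depth along axis 2.
texture = np.zeros((K, K, K, 3))
texture[..., 0] = np.arange(K) / (K - 1)

image, hit = render_first_hit(geo_full, texture, axis=2)
print(geo_half.shape, geo_full.shape, image.shape)
```

In the actual method, the two upsampled grids would be judged by geometry discriminators and the rendered views by texture discriminators; the sketch only shows the shapes of the tensors that flow between those stages.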

- Results -

Each row shows the coarse content voxels, the style shape, the detailized geometry, and the detailized geometry with texture. Please refresh the page if the GIFs are not synced.

- Citation -

@inproceedings{10.1145/3610548.3618201,

author = {Chen, Qimin and Chen, Zhiqin and Zhou, Hang and Zhang, Hao},

title = {ShaDDR: Interactive Example-Based Geometry and Texture Generation via 3D Shape Detailization and Differentiable Rendering},

year = {2023},

booktitle = {SIGGRAPH Asia 2023 Conference Papers},

}